Age Assurance Technologies

For today’s consumers, the web isn’t novel anymore. Just as automakers had to learn to make cars safer and more reliable in the late 20th century, web platforms need to cater to digital natives with higher expectations of both privacy and safety.

As many as one-third of all internet users around the world are children, and they use internet tools and apps for schoolwork, socializing, playing games, and safely exploring the world. Parents have a reasonable expectation for their children’s safety online, but that often does not line up with how all these apps are used. Consider: In the pre-digital era (or even the pre-app era), parents had the ultimate control over most of the content children engaged with simply because the adults purchased those assets individually (televisions, cable subscriptions, game consoles). Adults were the ultimate gatekeepers.

Now, protecting minors online is much more of a balancing act, and the commercial realities simply do not favor privacy. The current business model of most social media apps, for example, is highly targeted advertising. Usage data is their literal currency, and that includes the behavior of minor users.

Parents, for their part, are increasingly concerned about disclosing their children’s personal information. Those concerns often sit in direct conflict with the needs of online service providers and social media apps, which are under increasing regulatory pressure to age-gate content and verify users.

And while that regulatory environment favors protecting children online, those same children are likely to flex their independence digitally without their parents’ knowledge (let alone permission). Put more bluntly: Kids know that they won’t be able to access certain sites if they are underage. So when asked their age, they fib, rendering self declaration useless.

So what can technology do to enforce guardrails for children that allow them to explore the digital universe safely, and not ensnare apps and tech firms in more regulatory red tape?

The Challenges of Age Assurance Technologies

Enterprises and service providers face a different challenge today than they did in the late 20th and early 21st centuries. Back then, consumers were accustomed to high barriers to entry for both content (cable television, for example) and products. Put simply: We were all accustomed to processes and paperwork, including know-your-customer (KYC) verification, for opening bank accounts, getting approved for financing, and so forth.

Digital platforms were not created with the assumption that either age verification or KYC processes would ever become a necessity. The digital landscape is changing, however, and existing age verification processes still mirror the expectations and behaviors of a pre-digital audience. Further, digital platforms have done little to earn the trust and deliver the value that today’s digital natives (Millennials and Gen Z) demand.

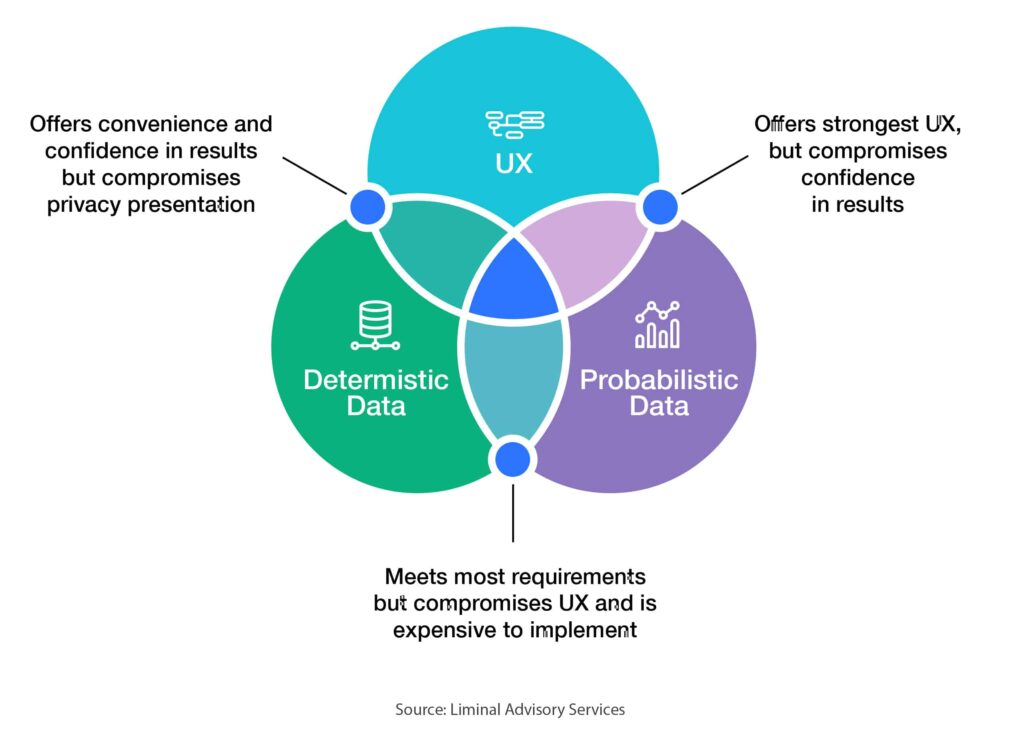

Age assurance systems still sit in the middle of an ongoing tug of war between efficacy and privacy. Technology that errs on the side of privacy tends to be inaccurate. Various AI innovations are extremely effective, but they could give third parties access to minors’ data in a way that is both troubling for parents and in conflict with the law.

In an ideal world, age assurance processes would deliver three things: user convenience, accurate age verification, and privacy protection. The reality: Today, providers have to settle for two and are unlikely to get all three.

Solutions for age verification pose a challenging balancing act for tech providers. The easier the process for the user, the less valuable it is for the provider. The available technologies that have a better success rate for providers ask for more personal information, much of which can be stored within the app itself. In short: What’s convenient for the user today isn’t adequate for the service provider.

Existing Age Assurance Technologies

Age assurance tools need to satisfy what are widely discussed as “the Four C’s” of risk for children online: content, contact, conduct and contract.

- Content: Age assurance tools can demonstrate whether users are 18 or older in order to block adult/harmful content.

- Contact: Apps can flag adult users in platforms that allow users of all ages, in order to block adults from interacting with children (and vice versa).

- Conduct: Tools can reduce the risk of younger children being unduly coerced or influenced by older children and adults while allowing similarly aged peer groups to interact freely.

- Contract: In many countries, platforms and companies may not collect data from children younger than 13. However, those limits don’t apply to children ages 13-18. Advertising-driven platforms could benefit from age assurance tools that make children older than 13 eligible for data collection and targeted advertising.
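The age thresholds in the list above can be sketched as a small policy function. This is only an illustration: the names `Permissions`, `permissions_for_age`, and `may_interact` are hypothetical, the cutoffs of 13 and 18 are taken from the bullets above and vary by jurisdiction, and the "same band only" contact rule is a deliberately simplistic assumption.

```python
from dataclasses import dataclass

ADULT_AGE = 18            # assumed cutoff for adult content (Content)
DATA_COLLECTION_AGE = 13  # assumed cutoff for data collection (Contract)


@dataclass(frozen=True)
class Permissions:
    adult_content: bool    # may the user see adult content?
    data_collection: bool  # may the platform collect the user's data?
    band: str              # "child", "teen", or "adult"


def permissions_for_age(age: int) -> Permissions:
    """Map an already-verified age to what the platform may allow."""
    if age < 0:
        raise ValueError("age must be non-negative")
    if age >= ADULT_AGE:
        band = "adult"
    elif age >= DATA_COLLECTION_AGE:
        band = "teen"
    else:
        band = "child"
    return Permissions(
        adult_content=age >= ADULT_AGE,
        data_collection=age >= DATA_COLLECTION_AGE,
        band=band,
    )


def may_interact(age_a: int, age_b: int) -> bool:
    """Hypothetical Contact/Conduct rule: only users in the same band interact."""
    return permissions_for_age(age_a).band == permissions_for_age(age_b).band
```

Note that every decision here depends on the age passed in being trustworthy, which is exactly the problem the technologies below try to solve.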

As age assurance and identity technologies undergo an innovation and investment renaissance, the digital domain is still in need of consistent technologies that reliably answer the question, “How old are you?”

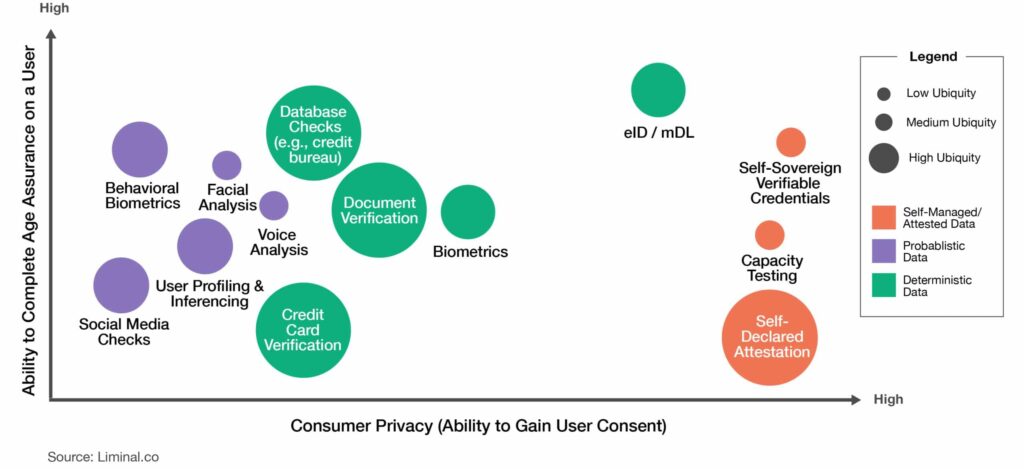

Self Declaration

As the name suggests, self declaration is the lowest-friction point of entry: users are simply asked to provide their own date of birth or birth year.

PROS:

- Low friction

- Simple UX

- Fast and convenient

- Favors user privacy

CONS:

- Easy for kids/users to lie

- Difficult/impossible for providers to verify
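A minimal sketch of a self-declaration gate shows why it is so easy to defeat. The function names `age_on` and `self_declared_gate` and the default minimum age of 18 are assumptions made for illustration, not any particular platform’s implementation.

```python
from datetime import date


def age_on(birth_date: date, today: date) -> int:
    """Age in whole years on the given day."""
    # Has this year's birthday already happened (or is it today)?
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def self_declared_gate(declared_birth_date: date, today: date,
                       minimum_age: int = 18) -> bool:
    """Allow access if the *declared* date of birth meets the minimum age.

    Nothing here checks that the declared date is true.
    """
    return age_on(declared_birth_date, today) >= minimum_age
```

The gate only ever sees what the user typed. A 13-year-old who enters a birth year of 2000 passes exactly as an adult would, and the provider has no signal to tell the two apart.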

Database Checks

Database checks rely on data collected by third parties (driver’s licenses, passports, and other forms of government-issued identification). The obvious advantage is that the individual has already been vetted by a third party (for example, a credit card account that is issued only to people 18 or older).

PROS:

- Difficult to forge documents

- Credible data available today from third-party brokers

CONS:

- Storing this data can conflict with privacy laws such as COPPA (U.S.) and GDPR (EU)

- Children without access to those payment resources (like a credit card) can’t access tools or apps

- Not necessarily fraud resistant (non-authorized users can use stolen identities to open accounts, etc.)

Biometrics

Biometrics is a relatively new technology and uses various facial, vocal, or physical (e.g., fingerprints) attributes to access data. Most users are familiar with facial recognition technology from cell phones and so forth. While it’s a proven technology for unlocking devices, it has limited application for age verification.

PROS:

- Easy to operate and use

- No need for personal data collection

- Could use device intelligence to verify user

CONS:

- Lack of user trust: A July 2021 Liminal survey found that a quarter of U.S. consumers never use biometrics on their smartphones, citing privacy concerns

- Most biometrics use stored data that could violate international laws designed to protect minors

- High error rate for facial analysis of children

Behavioral Biometrics

Behavioral biometrics uses AI to capture features related to physical movement and characteristics. From the collected data, it infers a pattern of behavior that belongs to a specific individual. Its use for age assurance is still in its infancy.

PROS:

- Unlike traditional biometrics, it satisfies privacy concerns

- Personal data is not exposed during the process

CONS:

- Could already be in violation of consumer protection laws

- Parents may not want their children’s personal behavior monitored or collected

- Big Tech’s well-documented missteps could negatively impact the growth of biometrics

Profiling

Profiling data is pulled from information that users have already chosen to share about themselves and combined with information inferred about them, including time spent on a web page, interests, friends, and location information. To date, profiling service providers have been used mainly for commercial advertising, but the data may be granular enough for age estimation. If used in combination with other verification tools, profiling could help build a reliable estimate of an individual’s age.

PROS:

- Does not require user input

- Providers have reasonably accurate data

- Profiling is mature and growing rapidly, thanks to AI and ML

CONS:

- Profiling creates significant tension between users and apps

- Could collect information beyond what is needed for age verification

- High risks for children being targeted with inappropriate content

Capacity Testing

Similar to the now-familiar CAPTCHA tests, capacity testing estimates a user’s age based on their capacity to complete a test (language tests, puzzles, etc.).

PROS:

- Highly private

- Requires little user interaction

- Gamifies user verification to reduce friction/make the process fun

CONS:

- Not all kids of the same/similar age are at developmental or intellectual parity

- Children with language or intellectual skill challenges could be blocked incorrectly from products and services

Electronic Identity (eID)

eIDs are electronic identifiers of an individual’s real-world identity. Once an eID is established (using a range of data points, including name, DOB, address, etc.), those identifiers could be stored in a digital wallet controlled by the end user. Age assurance poses an interesting but conflicted use case for eIDs.

PROS:

- Highly accurate

- Secure

- Low friction on the user end

CONS:

- Opportunities for interoperability among providers remain unclear

- Nascent technology

- Additional verification needed from third parties poses security risks

Self-Sovereign Verifiable Credentials

Self-sovereign identity (SSI) is generally defined as end-user identification tokens that can be distributed and retracted as needed. It’s seen as a very democratized approach that values an individual’s control over their digital identity. Today, this is viewed as a philosophical approach to identity and age verification and has not been widely adopted.

PROS:

- Enables consent on the user side for how and how long their identity is used by a third party

- Relatively frictionless for the user

- High level of user trust

CONS:

- Untested in the enterprise

- Still in its nascency

- Relies on self-attested data (not reliable)

- Could prove to be inefficient for law enforcement and regulators
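The idea of a token that the user can hand out and retract, with consent limited in time, can be sketched as follows. This is a toy model under stated assumptions: the class `AgeCredentialGrant`, the helper `issue_grant`, and the claim string `"over_18"` are hypothetical, and real verifiable credentials rely on cryptographic signatures and issuer verification that are omitted here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class AgeCredentialGrant:
    holder_id: str        # the user who controls the credential
    verifier_id: str      # the third party allowed to rely on it
    claim: str            # e.g. "over_18"; the date of birth is never shared
    expires_at: datetime  # consent ends automatically at this time
    revoked: bool = False

    def revoke(self) -> None:
        """The holder retracts consent before the grant expires."""
        self.revoked = True

    def is_valid(self, now: datetime) -> bool:
        """A grant is usable only if not revoked and not yet expired."""
        return not self.revoked and now < self.expires_at


def issue_grant(holder_id: str, verifier_id: str, claim: str,
                now: datetime, lifetime: timedelta) -> AgeCredentialGrant:
    """Create a time-limited grant for one verifier (hypothetical helper)."""
    return AgeCredentialGrant(holder_id, verifier_id, claim, now + lifetime)


# Example: a 30-day grant that the holder later retracts.
issued_at = datetime(2024, 1, 1, tzinfo=timezone.utc)
grant = issue_grant("holder-1", "site-a", "over_18", issued_at, timedelta(days=30))
grant.revoke()
```

The design point is that the verifier learns only a yes/no claim, and the holder decides how long that claim remains usable.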

Consumer and Child Protection Over Profits

To build a stable digital future, providers need to invest in interoperable technologies and standards that help regain user trust and protect privacy and user identity. Age assurance is a defining issue for today’s internet users, platforms, and content creators.

If age assurance technologies are well implemented, the industry could introduce an era defined by trust. By fusing digital and physical domains to create more reliable digital identities, we could:

- Usher in digital domains built on safety and trust

- Build platforms for a more inclusive and intersectional audience

- Reduce online fraud

- Build a more civic-minded society

It is incumbent upon all stakeholders to strive for both interoperability of new digital technologies and an internet that is safe and fun for children to explore, learn, develop, and communicate.

In the meantime, the strategy is to implement the technologies that are best for each use case. Liminal has strong expertise in this area, and has advised many clients on which technologies would be appropriate for their situation(s). If this is something your organization is interested in pursuing, contact us.